Cheap AI got us hooked — now it wants its Uber moment

Uber was amazing in the mid-2010s, at least in London. As it tried to win market share from traditional taxis and lure customers to its service, prices were incredibly cheap. But behind the scenes, it was losing billions.

This was the era of cheap money, and that enabled a loss-making growth strategy. By splashing the cash, Uber embedded itself through habit and culture – to the point that “getting an Uber” is now a synonym for any taxi app. With customer loyalty established, it cranked the prices up, and many users stuck around. Since 2023, it has reported operating profit every year.

That model – burning investors’ money to establish a product before hiking prices to turn a profit – has been emulated by many companies, but is less attractive in a post-pandemic world of higher interest rates. And that’s a big reason why, even though ChatGPT only launched as the first mass-market LLM in late 2022, it seems inevitable that AI is about to have its Uber moment.

Tightening the purse strings

Many don’t appreciate how good we’ve had it in the early days of AI. All the major models can be used free of charge, sometimes even without logging in. Premium plans cost around £20 per month, with the all-singing, all-dancing subscriptions around £90. It’s all very reasonable for a product that utilises cutting-edge technology and is hailed as a catalyst for global productivity.

Good things never last, and we’re already seeing signs that the wind is changing. In recent months, there have been many headlines about rising prices and tightened rate limits – one way or another making AI more costly to the end user. And we’ve seen this from many of the major players:

- In March, ChatGPT boss Nick Turley said he expects pricing to “significantly evolve”, and that an unlimited plan “just doesn’t make sense” – likening it to having an unlimited electricity tariff.

- Anthropic has quietly shifted Claude enterprise customers to a new pricing model on renewal, moving from seat-based to usage-based pricing. It also briefly removed Claude Code from Pro plan materials, before reverting the changes to its website and documentation.

- Meanwhile, Microsoft is reportedly plotting to move GitHub Copilot users from request- to token-based billing – meaning they’ll pay for the volume of text their requests process, rather than the number of requests they make.

While the companies all have slightly different approaches, the message is clear: The era of cheap AI access is ending, and before too long the price you pay will be tied much more closely to what it costs to operate these models.

This was reflected in a recent Forrester blog post that warned technology leaders that AI costs “will only go up”. However, rather than advising CIOs to reduce their reliance on LLMs, it concluded that they needed to renegotiate terms with the wider business, billing other departments for their usage. As one quoted executive put it, the business must “own AI success while IT supports it”.

A predictable trend

From the consumer end, this looks like enshittification – a product that previously let you do more is increasingly gating how much you can use it. However, the circumstances are slightly more justifiable. The AI companies are attempting to avoid burning billions more dollars in operating costs, rather than trying to squeeze everything they can from their customers. A few factors made these moves rather predictable:

- Companies want profit. The major providers don’t expect to be profitable until at least 2028, and historically their burn rate has been alarming. A Forbes article quoted internal Cursor calculations, whose accuracy is contested, suggesting that a user with a $200 Claude subscription could use thousands of dollars of compute. It might not be that bad, but users are certainly getting more than they pay for.

- Models are getting more demanding. AI companies need to keep up with the curve as models improve. That means not only research and development costs, but also the high-speed memory, GPUs, and other hardware required to run them. The industry’s appetite for memory has been so great that it has driven up prices even for consumer-grade gear used for productivity and gaming.

- AI has (arguably) demonstrated its value. Much like Uber had to convince users it was a viable alternative to taxis, AI needed to prove it had a place in business. A debate rages about how effective AI really is, but what can’t be denied is that AI rhetoric has convinced leaders, vendors, and consumers alike that AI is needed in their work.

- Unlimited investment can’t last forever. It’s a tighter market than we’ve seen for a long time, and three years have passed since ChatGPT’s public launch brought LLMs into the mainstream. The product has improved substantially since, and investors won’t want to subsidise it indefinitely. Now that habits have been established and people are using AI daily, they’ll want to see returns.

To some extent, more significant price increases are a matter of who blinks first. AI providers will know that the first to charge what their models actually cost to run risks losing market share to competitors, which locks them into something of a stand-off. But much like current funding levels, it won’t last forever.

Inching towards AGI?

A bizarre footnote to this discussion is that the definition of artificial general intelligence (AGI) used by Microsoft and OpenAI is based not on the models themselves, but on the money they earn. Their agreement reportedly defines AGI as an AI system that can generate at least $100 billion in profit. By that rulebook, if users stick around as prices increase, AI is proving its economic value and we’re moving closer to AGI.

AI as an embedded risk

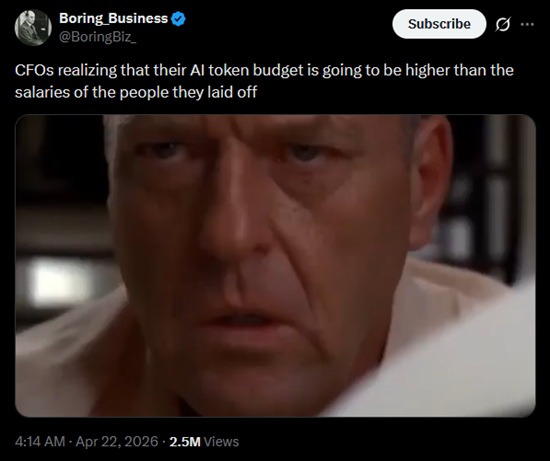

Imagine you’re a leader at a technology company. You saw the AI hype, tried it out yourself, and the output looked good. You instructed your employees to use AI and your developers’ productivity spiked. Claude Code became a core part of your development workflow, and you downsized your workforce to match. The AI rollout has played out exactly as you wanted it to.

But there is a risk that the cost-benefit equation changes drastically as AI costs increase – either in the form of price hikes for what you’re already using, or tier upgrades made necessary by tighter rate limits. Suddenly, you’re looking at an AI bill higher than what you were paying all those humans a few years ago. AI’s value proposition has changed overnight.

Much like those Uber users in the early 2020s, by now you can’t imagine a world without AI. It’s embedded in your core processes and products. You’ve promised “AI-powered” features to customers. You’ve cut back on headcount. Ripping AI out would be a long, disruptive project, requiring you to backtrack on things you confidently declared mere months ago. Whether due to practical necessity, contractual obligation, or ego, you can’t shake the soaring costs.

That’s the risk here – that businesses that have gone all-in on AI get caught out as prices rise to match what the services cost to run. As with any technology, it’s worth investing some time in business continuity planning in case your teams are forced to cut their token consumption one day. Maybe you could even generate a plan with an LLM while they’re still cheap.