Nvidia's DLSS 5 swaps artistic vision for AI guesswork

It’s said that AI will usher in an era in which taste is the differentiator. When production is fully democratised and anybody can generate what they envision without a huge team behind them, the playing field will finally level out, and the products that rise to the top will be the most refined and artistic.

The fifth iteration of Nvidia’s Deep Learning Super Sampling (DLSS), shown off this week, clashes with this vision more severely than nearly any other tool that companies dabbling in AI have developed in the last few years.

The best thing since ray tracing?

Billed by Nvidia as the “GPT moment for graphics”, DLSS 5 essentially redraws each frame of gameplay with the help of AI, in theory increasing the level of detail in the scene without any extra effort from developers. Nvidia describes it like this:

“DLSS 5 takes a game’s color and motion vectors for each frame as input, and uses an AI model to infuse the scene with photoreal lighting and materials that are anchored to source 3D content and consistent from frame to frame. DLSS 5 runs in real time at up to 4K resolution for smooth, interactive gameplay.”

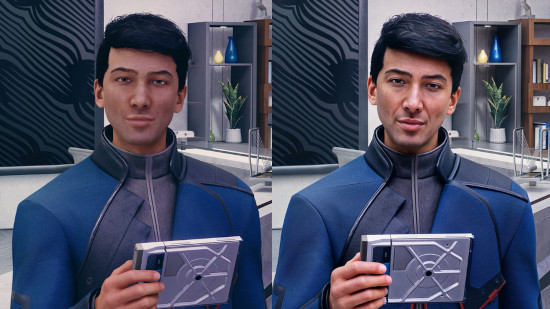

The launch included before-and-after shots from games like Resident Evil Requiem, EA FC, and Starfield. There are many noticeable improvements, especially in areas that have traditionally challenged game developers, such as the detail on clothes and individual strands of hair. The faces in Starfield, whose graphics were criticised at its 2023 release, look a thousand times better.

Yet even at a purely visual level, there are problems. In some of the EA FC shots, the model was evidently confused by players overlapping at high speed, and strange artefacts glitch in and out of existence. Hogwarts Legacy loses much of its visual style, its rustic tone cast aside in favour of more washed-out colours, likely the model’s attempt at an upgrade to hyperrealism. And while characters’ features are sharper, some no longer look like the same people.

The performance tax

But the real problems with DLSS 5 go beyond the graphics themselves. Nvidia obviously has an interest in pushing AI-grade hardware onto gamers, but high-end graphics cards, which run into the thousands of pounds, are out of reach for the majority. Most players are making do with what they already have.

Processing power is also a budget that must be closely monitored in modern gaming. Optimisation is a lost art, and games that look only marginally better than those of ten years ago struggle to hit decent frame rates, even on respectable hardware. For now, DLSS 5 is a high-end feature, but is Nvidia’s vision a future in which we all spend valuable resources on having AI guess what games should look like, at the expense of performance?

The hidden cost

Even for DLSS 5 enthusiasts, there was a concerning note hidden in Nvidia's FAQ: "The DLSS 5 early preview demo shown at GTC is run on two GeForce RTX 5090s. One RTX 5090 is dedicated to rendering the game while the other is dedicated for running the DLSS 5 model. DLSS 5 will be optimised to run on a single GPU for release." That's right: at present, DLSS 5 can't even run alongside a game on a single top-of-the-range graphics card. Nvidia promises to optimise it for release, but once the model has to share one card with the game it is enhancing, it's hard to imagine it won't take a heavy toll on performance.

But perhaps we could live with all that. My biggest issue with DLSS 5 is the guessing itself. By attempting to fill in the gaps, the model detracts from the art of games and their creators’ vision. Just as some things are left unsaid in great literature, some things are deliberately left dark or undefined in games. Not every title aims for hyperrealism, but Nvidia’s demo suggests that DLSS 5 assumes everything should be bright and photorealistic.

Leaning on the hardware

I also worry about money-grabbing publishers making their games dependent on this kind of technology. Why should developers waste time crafting graphics when they could slap a minimum requirement for DLSS 5 on the (virtual) box and have the model take care of it? Just throw some rough polygons together and the player’s graphics card will make it look like an AAA game.

The “taste as differentiator” argument assumes that AI handles production while humans supply the vision. Technology like DLSS 5 breaks that division: it takes over the vision too, substituting its own assumptions about what games should look like for decisions historically made very deliberately by developers.

And if publishers can ship rough assets knowing that the model will paper over the cracks, the incentive to exercise taste and discernment disappears. The thesis of AI as a creative leveller assumes that the humans in the loop still care about craft and quality. DLSS 5 makes it easier not to.